According to the World Health Organization, about two to four of every 1,000 people in the U.S. are “functionally deaf,” with the majority of them aged 65 years or older.

More than 70 million people worldwide are deaf, with more than 80% of them residing in developing countries.

More than 1,700 babies in 2020 were estimated to have been born deaf.

Deafness affects not only the individual but also their family, their friends, and even the economy. Deaf and hard of hearing people can communicate with one another, and hearing people have their own spoken and written languages for communication. However, not everyone knows American Sign Language or is even ready to learn it.

How can we become a more inclusive society?

How can we establish better communication with Deaf and hard of hearing individuals? How can we make it easier for them to communicate with hearing people so that we all become more connected?

My initial big vision for this project was to invent a pair of gloves with motion sensors on each digit that are detected by a camera on a phone or computer. The hand gestures would be converted to text in real time, and the set of gestures could be customized or selected depending on the need: ASL, BSL, or simply any hand gestures we set up.

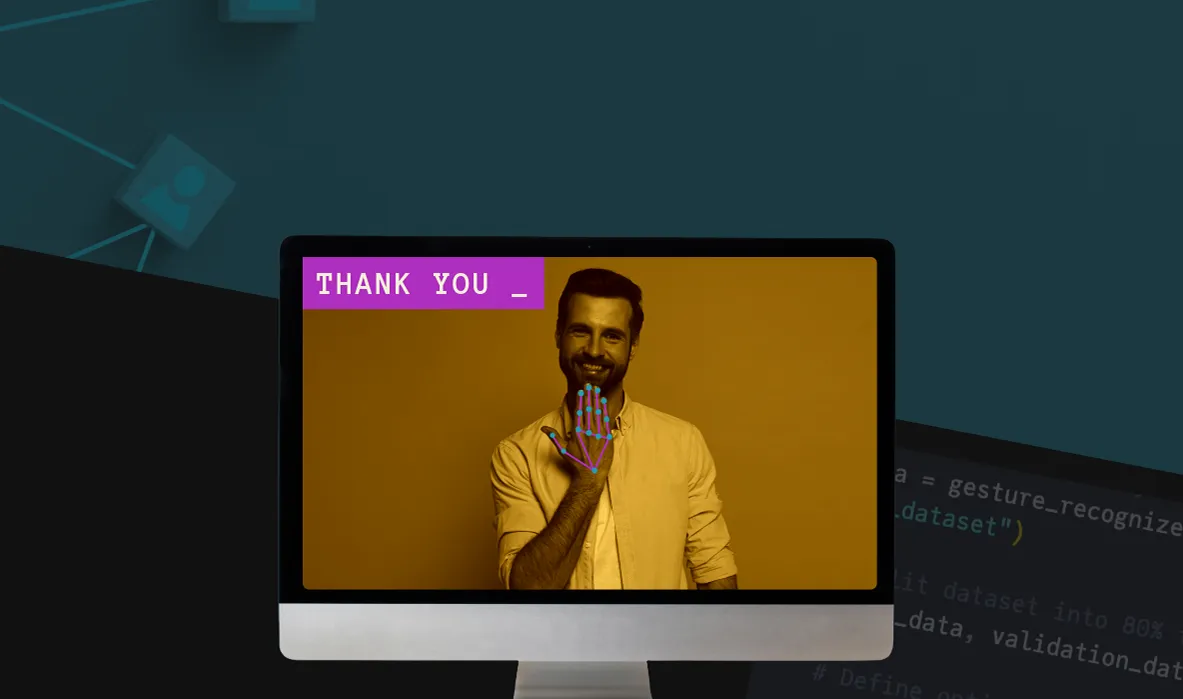

My initial prototype goal was to create a basic application that converts hand gestures for the numbers 1 to 10 into text on the screen in real time.

Previous Related Projects

In my initial research, I found that there have been a number of media art projects involving motion detection and hand and body gestures. Most of those were for visual art purposes or for device control. I’ve also seen gloves with motion sensors used in music to simulate playing instruments. Imogen Heap was one of the first artists to experiment with that. Ariana Grande also used similar gloves to play music live.

After doing more research, I came across more projects that are closer to the scope of my project. Here is a non-exhaustive timeline of some of these projects:

Ryan Patterson of Grand Junction, CO invented an easily transportable tool for translating sign language and communicating with the deaf when he was in high school. Now an aerospace engineer, he talks about the process of inventing. (2009)

See how Kinect's sign language translation capabilities help the hearing and the deaf communicate. A project by Microsoft Research (2013)

MotionSavvy, the San Francisco startup working on tech to help the deaf communicate, has launched an Indiegogo campaign to commercialize its first product, UNI. The UNI app works with Leap Motion technology to translate each sign from American Sign Language into audible words on a tablet. Sarah Buhr gets an inside look at MotionSavvy's new product. (2014)

University of Washington undergrads Tommy Pryor and Navid Azodi have created talking gloves technology to help the deaf and mute communicate with the hearing world. The prototype, called SignAloud, won a $10,000 prize from the Lemelson-MIT program, which celebrates outstanding inventors. (2016)

Easy Hand Sign Detection | American Sign Language ASL | Computer Vision Murtaza's Workshop - Robotics and A (2022)

Thoughts, Challenges, and Decisions

I had the opportunity to connect with a few people from the Deaf and Hard-of-Hearing community. It was very useful to gain insight into what they, and many others they know from the community, think about past projects. I wanted to know more about their experience with such technologies and the effect (if any) they had on the community.

Based on my interviews and the feedback I received, there seems to be a general skepticism toward these types of projects, largely due to past “failures”—such as the talking gloves—that were developed without meaningful input from the Deaf community and ultimately proved to be impractical. The core issue is that these products focus solely on hand signs while overlooking crucial aspects of sign language, including body movement, facial expressions, and cultural references within the Deaf community. Another major reason the Deaf community has rejected these gloves is the underlying assumption that Deaf individuals should adapt to the standards of hearing people. Why should a Deaf person be expected to wear gloves to "translate" their language when a hearing person could simply take the time to learn some basic signs? Also, after doing more research, it seems that many of the projects and prototypes that came out in the past 20 years (some of which are included above) didn’t develop into products that were deemed useful by the Deaf and Hard of Hearing communities. This is the case, especially, for projects that involve gloves and extra hardware and wires.

Computer vision is still being actively explored, and new projects keep looking for ways to take advantage of it in many applications. Therefore, I decided to steer away from the idea of signing/talking gloves and focus on experimenting with computer vision. Even if this project doesn’t end up being useful for sign language, I would still gain enough knowledge and experience to adapt the project and code to other applications like emotion recognition, body language analysis, facial expression to emoji, or even personal art projects.

Nothing in this project is considered a waste of time. This is one of those projects where I can say with confidence (get it? Machine learning, confidence?... anyway..) that it is not the destination, it is the journey that matters. Knowledge gained during this journey will open up paths to more destinations.

Now, let’s get to it…

Tools, Software and other Materials I used:

Python 3.9.21

Google Colab

Microsoft Visual Studio Code

MediaPipe

MediaPipe Model Maker

NumPy

OpenCV

scikit-learn

TensorFlow

MacBook Pro 2020

Logi 1080p Webcam

Compatibility Issues:

Several packages are needed for this project. Each package has many versions: some deprecated, some older, and some recently updated. It took quite a while to find versions of the packages that work together with no errors, so I decided to share the packages and their versions here. I’m sure this is not the only combination that works. However, this is what eventually worked for me.

| Package | Version |
|---|---|
| keras | 2.15.0 |
| mediapipe | 0.10.21 |
| mediapipe-model-maker | 0.2.1.4 |
| numpy | 1.23.5 |
| opencv-contrib-python | 4.11.0.86 |
| opencv-python | 4.11.0.86 |
| opencv-python-headless | 4.11.0.86 |
| scikit-learn | 1.6.1 |
| tensorflow | 2.15.1 |
| tensorflow-addons | 0.23.0 |
| tensorflow-datasets | 4.9.3 |
| tensorflow-estimator | 2.15.0 |
| tensorflow-hub | 0.16.1 |
| tensorflow-io-gcs-filesystem | 0.37.1 |
| tensorflow-metadata | 1.13.1 |
| tensorflow-model-optimization | 0.7.5 |
| tensorflow-text | 2.15.0 |
| tf_keras | 2.15.1 |
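
One way to confirm that an environment matches the versions listed above is to compare them against what is actually installed. The snippet below is a small sketch of that idea using only the standard library. It is not part of the original project, and the names `PINNED` and `find_mismatches` are made up for illustration. Only a few of the packages are listed, and the rest can be added the same way.

```python
from importlib.metadata import PackageNotFoundError, version

# A few of the pinned versions from the table; extend with the rest as needed.
PINNED = {
    "mediapipe": "0.10.21",
    "mediapipe-model-maker": "0.2.1.4",
    "numpy": "1.23.5",
    "opencv-python": "4.11.0.86",
    "scikit-learn": "1.6.1",
    "tensorflow": "2.15.1",
}


def find_mismatches(pinned, get_version=version):
    """Return {package: (expected, installed_or_None)} for every pin that is not met."""
    mismatches = {}
    for name, expected in pinned.items():
        try:
            installed = get_version(name)
        except PackageNotFoundError:
            installed = None  # the package is not installed at all
        if installed != expected:
            mismatches[name] = (expected, installed)
    return mismatches


if __name__ == "__main__":
    for name, (expected, installed) in find_mismatches(PINNED).items():
        print(f"{name}: expected {expected}, found {installed or 'not installed'}")
```

Running this in a fresh Colab runtime or a local virtual environment prints one line per package that differs from the table, and nothing if everything matches.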

Main Steps

I chose Python for this project because it is relatively easy and has a wide variety of useful packages and libraries, especially for machine learning and AI projects. Also, working in Python allows you to write, run, and debug your code in a notebook using Google Colab. This gives you access to more resources and hardware, which can cut hours from model training and testing that would otherwise take much longer and put a heavy load on your system if done locally.

Build the model

Below are the main steps/tasks I did to build, train, and test my model:

Install packages needed in the project.

Load data from ASL gesture dataset.

Create and train the model.

Evaluate the model.

Export the trained model.
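
The steps above can be sketched roughly as follows with MediaPipe Model Maker. This is a minimal illustration and not the exact code from this project. It assumes the dataset is a folder with one subfolder of images per gesture label, plus a "none" folder of non-gesture images, which Model Maker's gesture recognizer expects. The function names, the `exported_model` directory, the 80/10/10 split, and the epoch count are placeholder choices.

```python
import os


def list_labels(dataset_dir):
    """Return the sorted label names, i.e. the subfolders of dataset_dir."""
    labels = sorted(
        entry for entry in os.listdir(dataset_dir)
        if os.path.isdir(os.path.join(dataset_dir, entry))
    )
    if "none" not in [label.lower() for label in labels]:
        raise ValueError('Expected a "none" folder with non-gesture images')
    return labels


def train_gesture_model(dataset_dir, export_dir="exported_model", epochs=10):
    """Load the data, train, evaluate, and export a gesture recognizer."""
    # Imported here so list_labels works even where Model Maker is not installed.
    from mediapipe_model_maker import gesture_recognizer

    print("Labels:", list_labels(dataset_dir))

    # Load the images; Model Maker extracts hand landmarks from each one.
    data = gesture_recognizer.Dataset.from_folder(
        dirname=dataset_dir,
        hparams=gesture_recognizer.HandDataPreprocessingParams(),
    )

    # Split into 80% training, 10% validation, 10% test.
    train_data, rest_data = data.split(0.8)
    validation_data, test_data = rest_data.split(0.5)

    # Create and train the model.
    hparams = gesture_recognizer.HParams(export_dir=export_dir, epochs=epochs)
    options = gesture_recognizer.GestureRecognizerOptions(hparams=hparams)
    model = gesture_recognizer.GestureRecognizer.create(
        train_data=train_data,
        validation_data=validation_data,
        options=options,
    )

    # Evaluate on the held-out test split, then export a .task file.
    loss, accuracy = model.evaluate(test_data, batch_size=1)
    model.export_model()
    return loss, accuracy
```

In Colab, a call such as `train_gesture_model("asl_dataset")` (a hypothetical folder name) would run the whole pipeline and leave the exported model in `exported_model`.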

Detect & Recognize Hand Gestures

Initialize MediaPipe

Load the trained model

Detect gestures using webcam and MediaPipe

Recognize gestures using the trained model
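
A rough sketch of these four steps, using the MediaPipe Tasks gesture recognizer together with OpenCV, could look like the code below. It is an illustration under assumptions and not the project's actual code: the model file name `gesture_recognizer.task`, the 0.6 score threshold, and the helper names `top_gesture` and `run_webcam` are all placeholders.

```python
from typing import Optional


def top_gesture(result, min_score=0.6) -> Optional[str]:
    """Return the label for the first detected hand, or None.

    `result.gestures` holds one list of candidate categories per detected
    hand; the first candidate of the first hand is used here.
    """
    if not result.gestures:
        return None  # no hand in the frame
    best = result.gestures[0][0]
    if best.score < min_score or best.category_name.lower() in ("", "none"):
        return None  # low confidence or the "none" class
    return best.category_name


def run_webcam(model_path="gesture_recognizer.task", camera_index=0):
    """Read webcam frames and print the recognized gesture for each one."""
    # Imported here so top_gesture can be used without these packages.
    import cv2
    import mediapipe as mp
    from mediapipe.tasks import python
    from mediapipe.tasks.python import vision

    # Initialize MediaPipe and load the trained model.
    options = vision.GestureRecognizerOptions(
        base_options=python.BaseOptions(model_asset_path=model_path),
        num_hands=1,
    )
    recognizer = vision.GestureRecognizer.create_from_options(options)
    capture = cv2.VideoCapture(camera_index)
    try:
        while capture.isOpened():
            ok, frame = capture.read()
            if not ok:
                break
            # OpenCV delivers BGR frames; MediaPipe expects RGB.
            rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
            image = mp.Image(image_format=mp.ImageFormat.SRGB, data=rgb)
            label = top_gesture(recognizer.recognize(image))
            if label:
                print(label)
            cv2.imshow("webcam", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):  # press q to quit
                break
    finally:
        capture.release()
        cv2.destroyAllWindows()
        recognizer.close()
```

Keeping the label extraction in its own small function makes it possible to adjust the confidence threshold without touching the camera loop.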

Convert & Display Hand Gestures as Text

Design the user interface

Convert hand gestures into text

Display text on Screen
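
Per-frame predictions tend to flicker, so one possible way to turn them into readable text is to accept a label only after it has been seen on several consecutive frames, and then draw it on the video frame. The sketch below shows that idea. The class `StableLabel`, the function `draw_label`, and the five-frame hold are hypothetical choices for illustration and are not taken from the project.

```python
class StableLabel:
    """Report a label only after it appears on `hold_frames` consecutive frames."""

    def __init__(self, hold_frames=5):
        self.hold_frames = hold_frames
        self._candidate = None  # label seen on the most recent frame
        self._count = 0         # how many frames in a row it has been seen
        self.current = None     # last label that was held long enough

    def update(self, label):
        """Feed the label for one frame (or None) and return the stable label."""
        if label == self._candidate:
            self._count += 1
        else:
            self._candidate = label
            self._count = 1
        if label is not None and self._count >= self.hold_frames:
            self.current = label
        return self.current


def draw_label(frame, text):
    """Overlay `text` on a BGR frame in place (requires opencv-python)."""
    import cv2

    cv2.putText(frame, text, (30, 60), cv2.FONT_HERSHEY_SIMPLEX,
                1.5, (0, 255, 0), 3, cv2.LINE_AA)
    return frame
```

In a webcam loop, each frame's prediction would go through `update`, and the returned label, if any, would be passed to `draw_label` before the frame is shown.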

Test and Iterate

Test the application

Document any changes/improvements needed

Back up, make changes, and test again.

Version 1.0

I successfully managed to detect, recognize, and convert hand gestures for numbers into text on screen. This gave me a push to add more features to the application. I retrained the model to recognize all letters of the alphabet except "J" and "Z", which require a moving hand gesture. The model also had some trouble recognizing the gesture for "R".

After training, validating, and testing the updated model, I made major changes to the user interface of the application. Instead of just recognizing and displaying numbers one by one, I turned the app into a small game where a series of numbers and letters is displayed, and the user should make the correct corresponding hand gesture for each. Here is the updated version: